Your Laptop Is a Dumb Terminal: Designing Devices for the Intelligence Era

Open your laptop right now. What do you see? A grid of icons. A file manager that hasn't fundamentally changed since 1984. A search box that searches strings. A taskbar full of windows you'll Alt+Tab between for the next four hours.

Then look at what you actually do with it. Most of you have Claude or ChatGPT open in a browser tab, doing the work the laptop used to do.

Your laptop has become a dumb terminal for AI.

That isn't a metaphor. It's a literal description of where the intelligence is and where it isn't. The model in the cloud understands you. The device on your lap doesn't.

Two eras, two architectures

The personal computer was designed for the classical computing era. The job-to-be-done was straightforward: organize files, run applications, search documents, fire off keystrokes. The OS was a coordinator of human activity.

Two design primitives ran the show:

- Search — the user formulates a query, the system returns matching strings

- Tasks — the user drives every step; the OS provides windows, menus, and shortcuts to make manual work faster

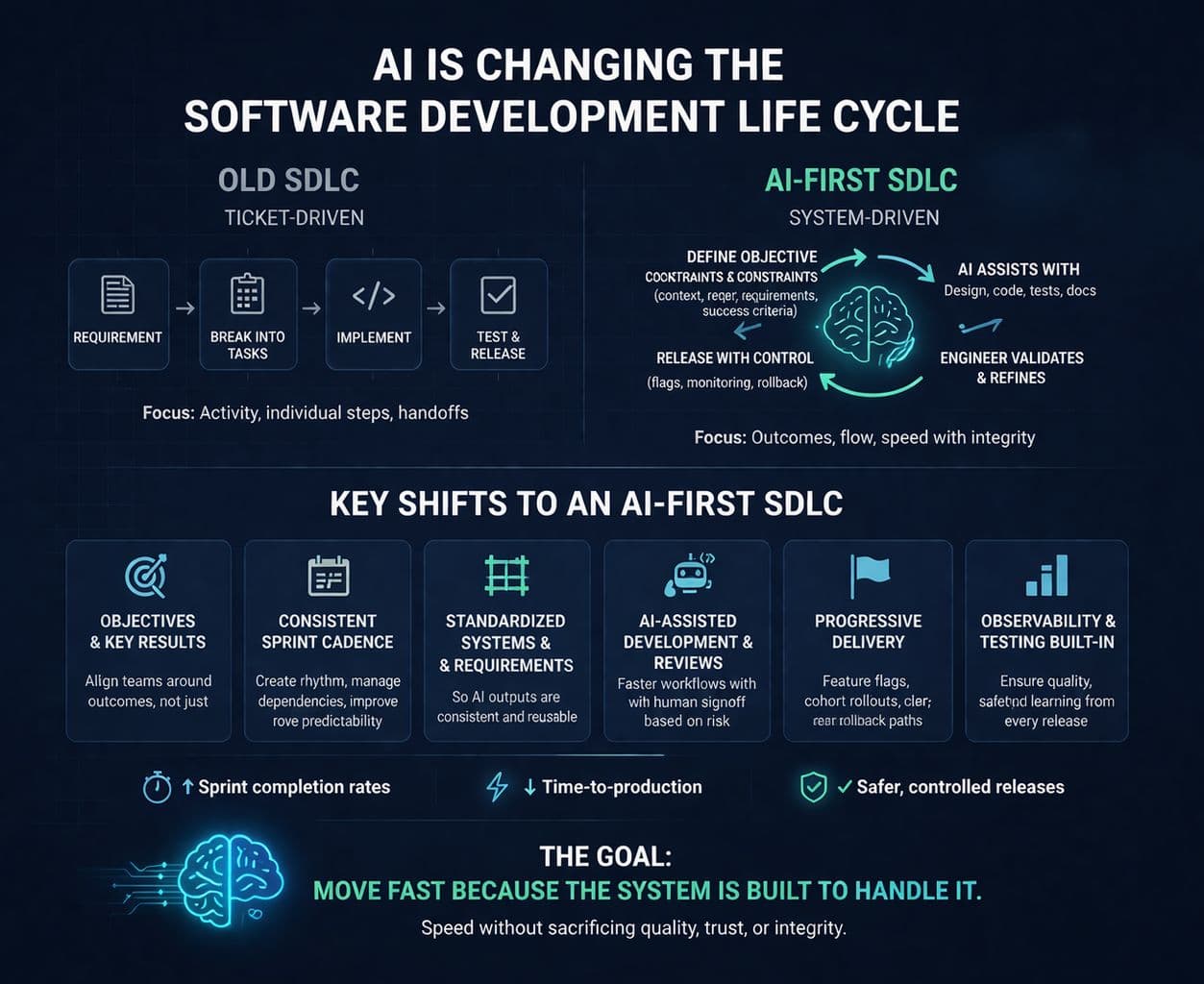

That architecture is twenty years stale. The intelligence era replaces both primitives:

- Search becomes understanding — you describe an outcome and the system reasons about what you need

- Manual tasks become agents — you delegate intent and an agent executes the steps, calling tools as needed

Same goals, fundamentally different control surface. And no current laptop or phone is built for it.

What's already changing — and why it isn't enough

Apple Intelligence. Microsoft Copilot+ PCs. Qualcomm Snapdragon X with NPUs. Google's Pixel AI features. Every OEM is shipping a story about "AI on the device."

But strip away the marketing and you'll notice something: they've added compute without changing the interaction model. Copilot+ ships with the same Start menu and File Explorer it had ten years ago. Apple Intelligence is grafted onto an OS that still pivots around the home screen grid.

This is the same mistake Rabbit and Humane made — but in reverse. Those companies tried to redesign the hardware without rebuilding the OS, and they shipped brilliant-looking devices that nobody could actually use. The OEMs are now doing the inverse: upgrading the silicon while preserving an interaction model from the typewriter era.

Both halves have to change together.

What dies in the intelligence era

The artifacts of classical computing that survive purely out of inertia:

- The file manager. You don't think in folders. You think in the thing I was working on with Sarah last week. An intent layer replaces hierarchical storage.

- The search box. You won't type "meeting notes Q2 marketing" — you'll say "what did we decide about the Q2 launch?" and get an answer, not links.

- The app grid. Apps are units of capability for the OS to draw on, not destinations for the user to visit. The user's entrypoint is the agent.

- Notifications. Today they fire-hose you. In the intelligence era, an agent triages them and only surfaces what crosses your threshold.

- Keyboard shortcuts. A relic of needing to be faster than the GUI. When you have an agent, you don't need shortcuts — you have intent.

That is not a smaller list. It is the entire affordance layer of the device.

What gets built in their place

- An intent layer, not a task layer — the OS exposes capabilities (send, summarize, schedule, find) and the agent composes them on demand

- A context hub that any agent can reason about — your calendar, your conversations, your location, your active projects, what you were just looking at — all queryable, all permissioned, all local-first

- Local-first NPUs that run agents on-device for privacy and latency, with cloud reach only when the task warrants it

- Always-on ambient sensors that give the agent context (where you are, who you're with, what you're looking at) without you having to ask

- Ambient displays instead of windows — surfaces that show you what an agent is doing without forcing you to manage the UI

This is not a "smarter Mac." It is a different kind of object.

A prediction

Here's the one I'd bet on: In five years, no one will type a search query. Search bars will look as quaint as floppy disk icons.

You'll describe an outcome ("get me on the next flight to SFO that lands before 3 pm and let my driver know") and a constellation of agents will negotiate the steps. You'll never touch the airline's app. You'll never open your calendar. The "device" disappears as a thing you operate, and reappears as a thing that operates on your behalf.

The OEM that ships this first wins the next twenty years.

The OEMs still selling NPUs as a feature on top of Windows 11 are going to look, in retrospect, like Sun selling faster terminals during the rise of the personal computer.

Share this post